Video Question Generation via Semantic Rich Cross-Modal Self-Attention Networks Learning

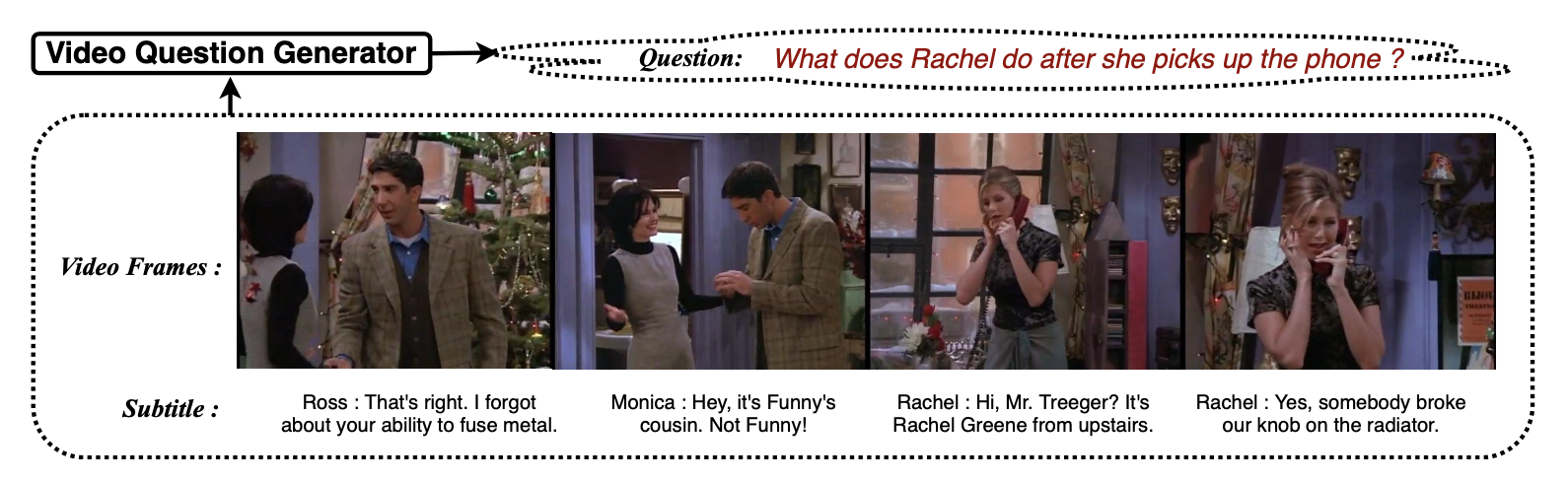

We introduce a novel task, Video Question Generation (Video QG). A Video QG model automatically generates questions given a video clip and its corresponding dialogues. Video QG requires a range of skills – sentence comprehension, temporal relation, the interplay between vision and language, and the ability to ask meaningful questions. To address this, we propose a novel semantic rich cross-modal self-attention (SR-CMSA) network to aggregate the multi-modal and diverse features. To be more precise, we enhance the video frames semantic by integrating the object-level information, and we jointly consider the cross-modal attention for the video question generation task. Excitingly, our proposed model remarkably improves the baseline from 7.58 to 14.48 in the BLEU-4 score on the TVQA dataset. Most of all, we arguably pave a novel path toward understanding the challenging video input and we provide detailed analysis in terms of diversity, which ushers the avenues for future investigations.