Audio Feature Generation for Missing Modality Problem in Video Action Recognition

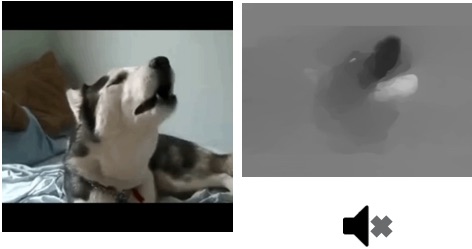

Despite the recent success of multi-modal action recognition in videos, in practice some of the required data are often unavailable. For example, while multi-modal action recognition requires both vision and audio data, the audio tracks of videos are easily lost because of broken files or device limitations. To cope with this missing-audio problem, we present an approach that simulates deep audio features from spatio-temporal vision data alone. We demonstrate that adding the simulated audio features can significantly assist the multi-modal action recognition task. Evaluating our method on the Moments in Time (MIT) dataset, we show that it performs favorably against the two-stream architecture, enabling a richer understanding of multi-modal action recognition in video.
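The idea of substituting a generated audio feature when the real one is missing can be illustrated with a minimal sketch. This is not the architecture proposed here: the linear generator, the concatenation-based fusion, and every name below (`make_linear_generator`, `fuse_features`, the toy weights and feature values) are assumptions chosen only to make the data flow concrete.

```python
from typing import Callable, List, Optional, Sequence

Vector = List[float]


def make_linear_generator(
    weights: Sequence[Sequence[float]],
) -> Callable[[Sequence[float]], Vector]:
    """Build a hypothetical generator that maps a visual feature to a
    simulated audio feature. A plain linear map stands in for whatever
    learned network would be used in practice (assumption)."""

    def generate(visual: Sequence[float]) -> Vector:
        # One output value per weight row: a dot product with the visual feature.
        return [sum(w * v for w, v in zip(row, visual)) for row in weights]

    return generate


def fuse_features(
    visual: Sequence[float],
    audio: Optional[Sequence[float]],
    generator: Callable[[Sequence[float]], Vector],
) -> Vector:
    """Concatenate visual and audio features; when the audio track is
    missing (None), fall back to the feature simulated from vision."""
    if audio is None:
        audio = generator(visual)
    return list(visual) + list(audio)


# Toy 3-dimensional visual feature and a 2x3 generator (illustrative values).
generator = make_linear_generator([[0.5, 0.0, 0.5], [0.0, 1.0, 0.0]])
visual_feature = [2.0, 4.0, 6.0]

fused_missing = fuse_features(visual_feature, None, generator)
fused_present = fuse_features(visual_feature, [0.1, 0.2], generator)
```

In both cases the downstream classifier would receive a fused vector of the same length, so the recognition model would not need to change when the audio track is absent.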